v0.1.0 · MIT

word2vec-rs

Production-grade Word2Vec in Rust — Skip-gram & CBOW with negative sampling, subsampling, linear LR decay, plots, and a CLI. Zero Python.

2 architectures · 8 modules · 45 tests · 2 CLIs

API documentation

Full rustdoc — every module, struct, and function with inline examples and doc tests.

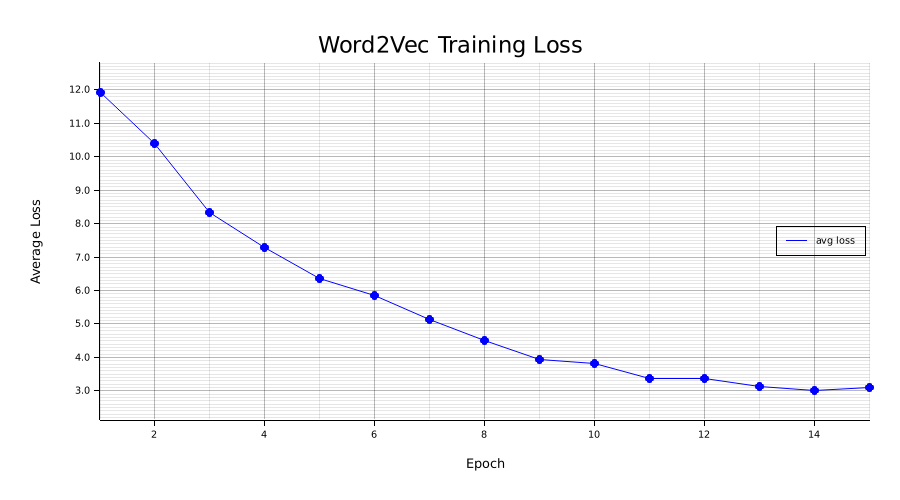

Training loss curve

Per-epoch average loss with linear LR decay from 0.025 → 0.0001.
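As a rough illustration of the schedule named above, here is a minimal sketch of linear decay between the two stated endpoints. The function name `linear_lr` and the `progress` parameter are hypothetical. Whether the crate advances the schedule per training pair or per epoch is not stated here, so `progress` is simply the fraction of training completed.

```rust
/// Linearly interpolate the learning rate from `lr_start` down to `lr_min`.
/// `progress` is the fraction of training completed, in [0.0, 1.0].
/// (Hypothetical helper, not taken from the crate's API.)
fn linear_lr(lr_start: f32, lr_min: f32, progress: f32) -> f32 {
    // Clamp so that overshooting the planned step count never pushes
    // the rate below the floor or above the starting value.
    let p = progress.clamp(0.0, 1.0);
    lr_start - (lr_start - lr_min) * p
}
```

With the endpoints shown in the plot, `linear_lr(0.025, 0.0001, 0.0)` gives the starting rate and `linear_lr(0.025, 0.0001, 1.0)` gives the floor.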

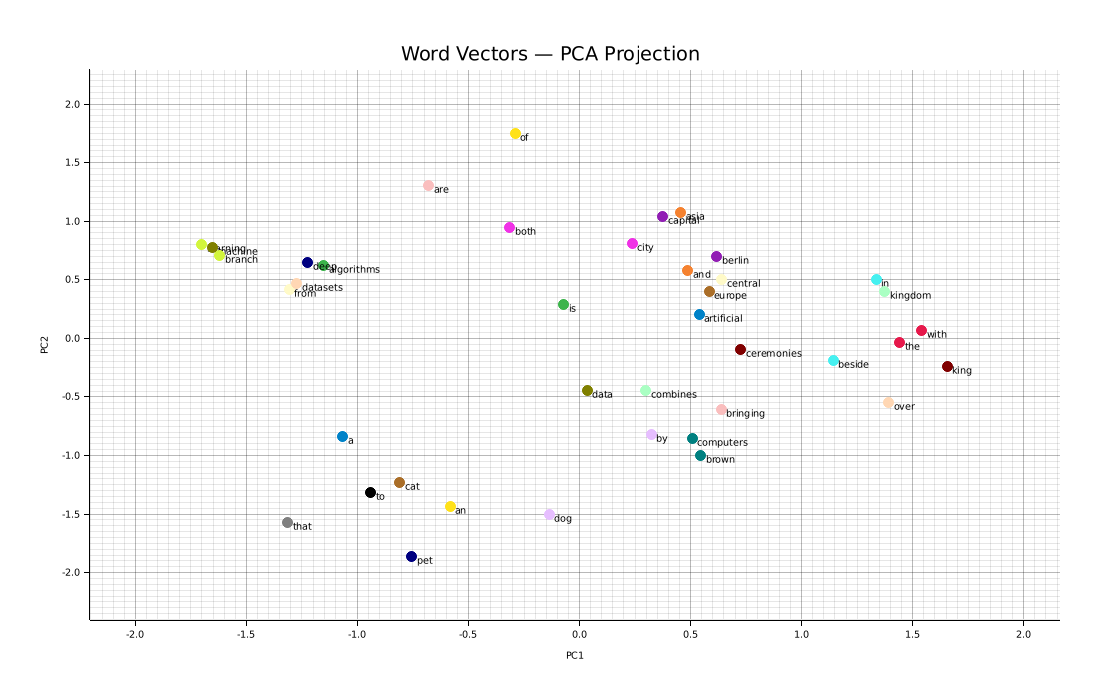

Word vector PCA

2D PCA projection of the top-50 vocabulary words after training.

Training history

JSON — loss, learning rate, pairs processed, and elapsed time per epoch.

Latest training run

Loss vs epoch — Skip-gram, dim=50, 15 epochs

PCA projection — top 50 words

Modules

error

Word2VecError — unified error type via thiserror

config

Config, ModelType — all hyperparameters with defaults

vocab

Frequency counts, min_count, subsampling, unigram noise table
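To make the subsampling and noise-table ideas concrete, the sketch below uses the formulas from the original Word2Vec paper: a keep probability of sqrt(t / f) for a word with relative frequency f, and a noise distribution proportional to count raised to a power (commonly 0.75). These formulas, the function names, and the parameter names are assumptions for illustration. The crate's `vocab` module may use a different variant.

```rust
/// Probability of keeping a word during subsampling.
/// `freq` is the word's relative frequency in the corpus and `t` is the
/// subsampling threshold. Assumes the paper's formula: keep = sqrt(t / freq).
fn keep_probability(freq: f64, t: f64) -> f64 {
    if freq <= 0.0 {
        return 1.0; // unseen or degenerate frequency: never discard
    }
    (t / freq).sqrt().min(1.0)
}

/// Unigram noise distribution for negative sampling: each count is raised
/// to `power` and the results are normalized to sum to 1.
fn noise_distribution(counts: &[u64], power: f64) -> Vec<f64> {
    let weights: Vec<f64> = counts.iter().map(|&c| (c as f64).powf(power)).collect();
    let total: f64 = weights.iter().sum();
    if total == 0.0 {
        return vec![0.0; counts.len()];
    }
    weights.into_iter().map(|w| w / total).collect()
}
```

Rare words (frequency below `t`) are always kept, while very frequent words are dropped most of the time. Raising counts to a power below 1 flattens the distribution so that rare words are drawn as negatives more often than their raw frequency would suggest.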

model

Xavier init, skip-gram & CBOW SGD in-place update
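The in-place SGD update for negative sampling can be sketched for a single pair of vectors as follows. This is a simplified illustration, not the crate's implementation: the function names are hypothetical, and both vectors are updated immediately per pair, whereas many implementations accumulate the input-vector gradient across all negatives before applying it.

```rust
fn sigmoid(x: f32) -> f32 {
    1.0 / (1.0 + (-x).exp())
}

/// One in-place SGD step on an (input, output) vector pair.
/// `label` is 1.0 for a true context word and 0.0 for a negative sample.
/// Returns the binary cross-entropy loss measured before the update.
/// (Hypothetical helper, not taken from the crate's API.)
fn sgd_pair_update(input: &mut [f32], output: &mut [f32], label: f32, lr: f32) -> f32 {
    let dot: f32 = input.iter().zip(output.iter()).map(|(a, b)| a * b).sum();
    let pred = sigmoid(dot);
    // Gradient of the loss w.r.t. the dot product is (pred - label),
    // so descending the gradient adds (label - pred) * lr times the other vector.
    let g = (label - pred) * lr;
    for (i, o) in input.iter_mut().zip(output.iter_mut()) {
        let i_old = *i; // use the pre-update input value for the output update
        *i += g * *o;
        *o += g * i_old;
    }
    let eps = 1e-7; // guards against ln(0)
    if label > 0.5 {
        -(pred + eps).ln()
    } else {
        -(1.0 - pred + eps).ln()
    }
}
```

Calling the function repeatedly on the same pair with `label = 1.0` pushes the two vectors toward each other and lowers the returned loss. With `label = 0.0` it pushes them apart.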

trainer

Training loop, linear LR decay, progress bar, epoch history

embeddings

most_similar, similarity, analogy, save/load JSON + text
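The queries listed above are typically built on cosine similarity. The sketch below shows one way they could work over plain slices. The names `cosine_similarity`, `top_k_similar`, and `analogy_query` and their signatures are hypothetical and chosen to avoid implying they match the crate's `most_similar`, `similarity`, or `analogy` functions.

```rust
/// Cosine similarity between two vectors; returns 0.0 if either has zero norm.
fn cosine_similarity(a: &[f32], b: &[f32]) -> f32 {
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let na = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let nb = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if na == 0.0 || nb == 0.0 {
        0.0
    } else {
        dot / (na * nb)
    }
}

/// Indices and scores of the `k` rows most similar to `query`,
/// skipping any row index listed in `exclude`.
fn top_k_similar(
    vectors: &[Vec<f32>],
    query: &[f32],
    exclude: &[usize],
    k: usize,
) -> Vec<(usize, f32)> {
    let mut scored: Vec<(usize, f32)> = vectors
        .iter()
        .enumerate()
        .filter(|(i, _)| !exclude.contains(i))
        .map(|(i, v)| (i, cosine_similarity(v, query)))
        .collect();
    // Sort by descending similarity.
    scored.sort_by(|x, y| y.1.total_cmp(&x.1));
    scored.truncate(k);
    scored
}

/// Query vector for "a is to b as c is to ?", computed as b - a + c.
fn analogy_query(a: &[f32], b: &[f32], c: &[f32]) -> Vec<f32> {
    a.iter().zip(b).zip(c).map(|((a, b), c)| b - a + c).collect()
}
```

An analogy lookup would pass the result of `analogy_query` to `top_k_similar`, excluding the three input words so they do not dominate the ranking.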

plot

Loss curve PNG + PCA scatter plot via plotters

bin/train

CLI — --input --output --dim --epochs --model --window